10 Best Electric Cars of 2026

These are the best new and used electric vehicles on the market for 2026.

Find the best used Toyota Tundra years, trims, reliability concerns, model-year changes, towing capacity, and which generations to buy or avoid.

Learn the pros, cons, risks, and inspection tips for buying a low-mileage used car, including odometer checks, maintenance history, and scam prevention.

A used Jeep Wrangler can be anything from a rudimentary weekend toy to a sophisticated off-roader loaded with modern features.

This guide explains how inspections work, which modern tools simplify the process, and what you must check, especially if you’re considering an electric vehicle (EV) or a tech-heavy model.

Used-car prices remain steady in 2026, but tight inventory and high loan rates make timing important. Here’s what buyers should know before purchasing a used car.

Temporary car insurance is not usually available, so most drivers need a standard policy, added-driver coverage, or rental insurance, depending on the situation.

Used Kia EV6 buying guide covering the best model years, key features, battery life, charging, pricing, and what to know before you buy.

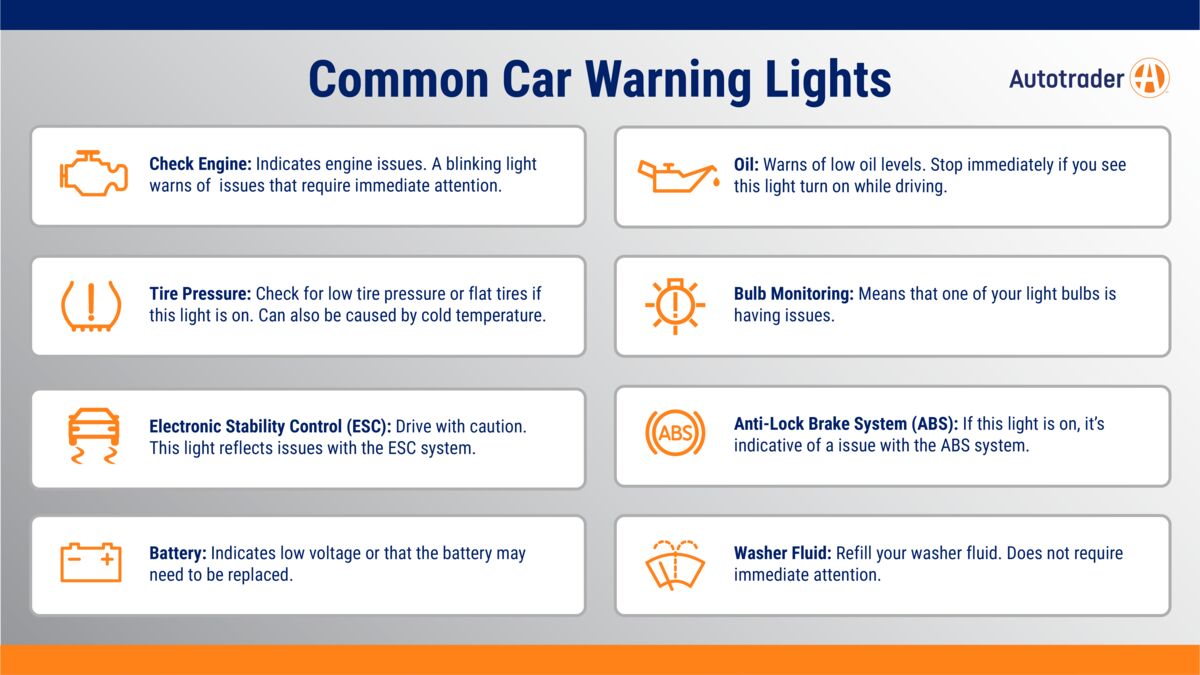

Understand what your car’s dashboard warning lights mean, from check engine and ABS to tire pressure and hood-open alerts.

Tire warranties can be worth it, but only some justify paying extra. Learn which tire warranties matter, what they cover, and typical price ranges.